A critical principle often taken for granted in product design is the concept of mental models. These ingrained understandings of the world, shaped by our experiences, govern how we use software. Think about the last time you used Google Maps or Apple Maps. You expect to type an address, see a map, and get directions, all expectations shaped by your real-world experience. If the app fails to meet these expectations, it clashes with your mental model. Generative AI products are unique because they often interact with users in ways that feel more human-like than traditional software does. This makes users' expectations and mental models even more critical: if the AI's behavior clashes with these models, users can become confused, frustrated, or even distrustful.

What Are Mental Models?

Mental models are internal representations that people create to understand how something works. They are based on previous experiences, assumptions, and learned knowledge. These models help users predict how to interact with a product and what to expect from it. For instance, when you see a door with a handle, your mental model tells you to pull it to open. (Unless it's one of those doors with a pull handle that actually needs to be pushed, in which case your mental model is probably screaming in frustration.) Mental models can evolve and adapt as users gain more experience.

Why Are Mental Models Important in UX Design?

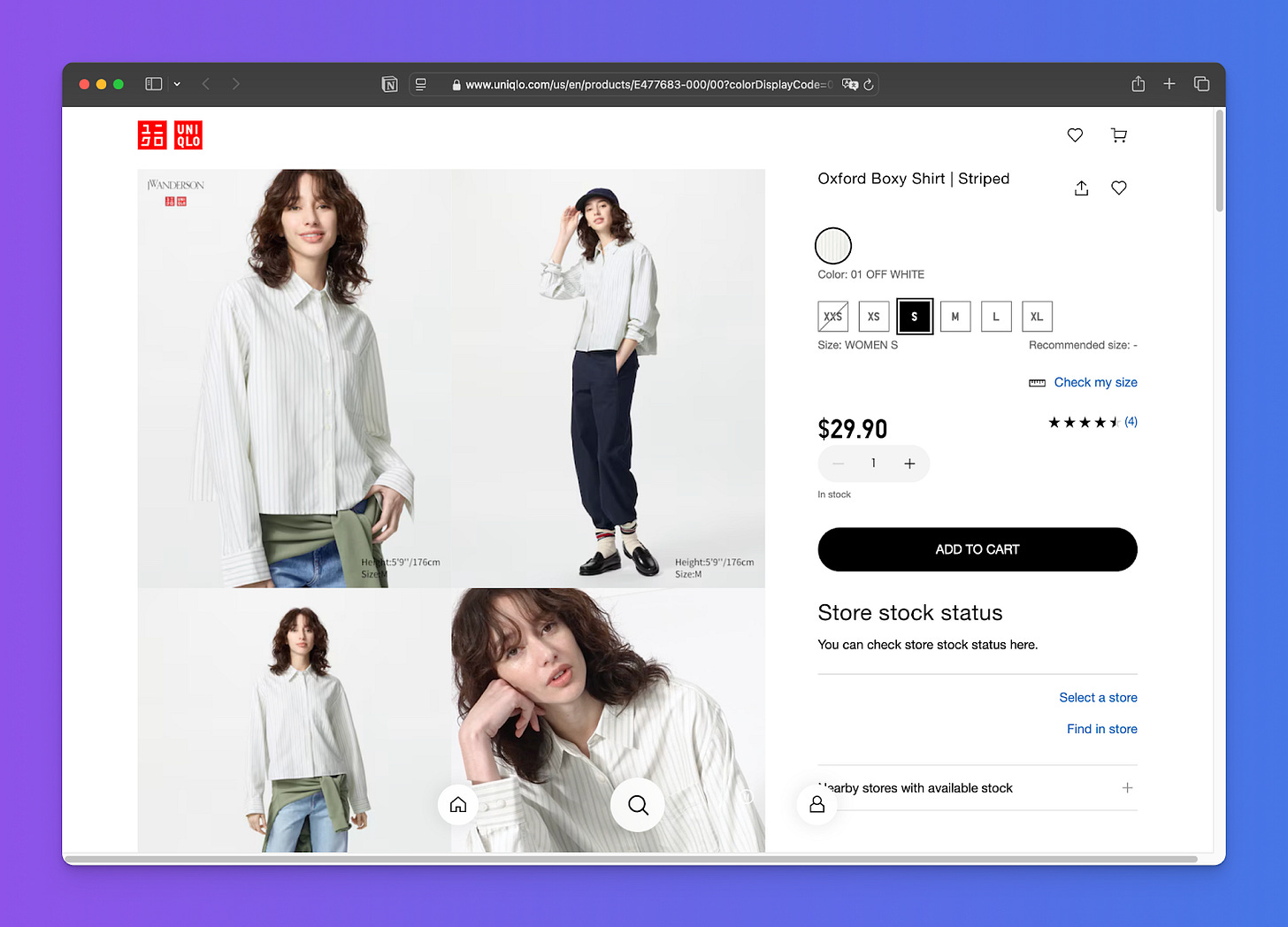

Aligning designs with users' mental models leads to more intuitive and user-friendly products. When products match users' mental models, they are perceived as easy to use, which reduces errors and increases user engagement. For example, e-commerce websites leverage users' mental model of shopping in a physical store through familiar actions and elements such as browsing products, adding items to a shopping cart, and going through a checkout process.

Mismatches between mental models and a product's design can lead to user frustration and product abandonment. Imagine a website where the "add to cart" button is replaced with a less familiar icon. This mismatch can confuse users and hinder their ability to complete their purchase.

How Generative AI Is Reshaping Mental Models

Generative AI lets users interact directly with AI models, which calls for a shift from thinking of AI as a tool to thinking of it as a collaborator. This shift can change users' mental models of how they interact with technology. Because the AI adapts to user input, generative AI can create more dynamic and personalized experiences. This adaptability requires designers to consider how users' mental models will evolve as they keep interacting with the AI.

Traditional software is deterministic: the same input always produces the same output. Generative AI is non-deterministic, so its output is not fully predictable. This shift requires designers to adopt new approaches that help users understand and work with the AI's ability to generate unique and occasionally unexpected outputs. Designers should strive to make the AI's processes more transparent and predictable where possible, and to provide clear explanations for unpredictable results.
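To make the contrast between deterministic and non-deterministic systems concrete, here is a toy Python sketch. It is not how any real generative model works. The functions `deterministic_reply` and `generative_reply` are hypothetical. A random choice among a few canned replies stands in, very loosely, for a model sampling its output. The only point is that a traditional system maps the same input to the same output, while a generative one can return different outputs for an identical input.

```python
import random


def deterministic_reply(prompt: str) -> str:
    """Hypothetical traditional system: a fixed lookup table,
    so the same input always produces the same output."""
    responses = {"hello": "Hello! How can I help?"}
    return responses.get(prompt.lower(), "Sorry, I don't recognize that command.")


def generative_reply(prompt: str, rng: random.Random) -> str:
    """Hypothetical stand-in for a generative model: it samples one of
    several plausible replies, so the same input can produce different outputs.
    (This toy ignores the prompt's content entirely.)"""
    candidates = [
        "Hello! How can I help?",
        "Hi there! What would you like to do?",
        "Hey! Ask me anything.",
    ]
    return rng.choice(candidates)


if __name__ == "__main__":
    # Five calls with the same prompt: the deterministic version yields one distinct reply.
    print({deterministic_reply("hello") for _ in range(5)})

    # Five calls with the same prompt: the sampled version may yield several distinct replies.
    rng = random.Random()
    print({generative_reply("hello", rng) for _ in range(5)})
```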

Challenges and Risks of Ignoring Mental Models in AI Design

Ignoring mental models in AI design can lead to several risks:

Reduced user trust: If the AI's behavior doesn't align with user expectations, it can erode trust in the system.

Increased user errors: Mismatched mental models can lead to users making incorrect assumptions about how the AI product works, resulting in errors.

Product abandonment: Frustration and confusion caused by misaligned mental models can lead users to abandon the product entirely.

AI's dynamic nature presents unique challenges. Designers need to consider how to communicate the AI's capabilities and limitations to users in a way that aligns with their mental models.

Designing AI Products with Mental Models in Mind

Building AI-native products, or embedding AI into existing ones, is not just a matter of slapping an AI model onto an existing interface; it requires a fundamental shift in how we think about user experience. We're dealing with a technology that adapts and can surprise us with its output. We need to guide users through this new landscape by setting clear expectations, facilitating understanding, and empowering them to achieve their goals. Here are a few best practices that can help us build generative AI products that are not only functional but also delightful and intuitive to use.

Set Expectations: Build on existing mental models and communicate the AI's adaptability and capabilities.

Onboard in Stages: Set realistic expectations early and introduce features gradually to allow users' mental models to adapt.

Plan for Learning: Connect user feedback with personalization and allow users to see how their actions impact AI outputs.

Account for User Expectations of Human-like Interaction: Clearly communicate the algorithmic nature of AI and set realistic expectations for human-like interactions.

Transparency: Indicate when AI is involved and provide explanations when possible.

Guided Prompting: Provide clear instructions and support for prompt refinement to help users interact effectively with the AI.

Prioritize User Goals: As always, focus on meeting specific user needs and goals and measure success based on user outcomes.

Examples of AI Products and Their Mental Models

Generative AI products thrive by aligning with users' existing mental models, creating experiences that feel intuitive and natural. Here are some examples illustrating how this works:

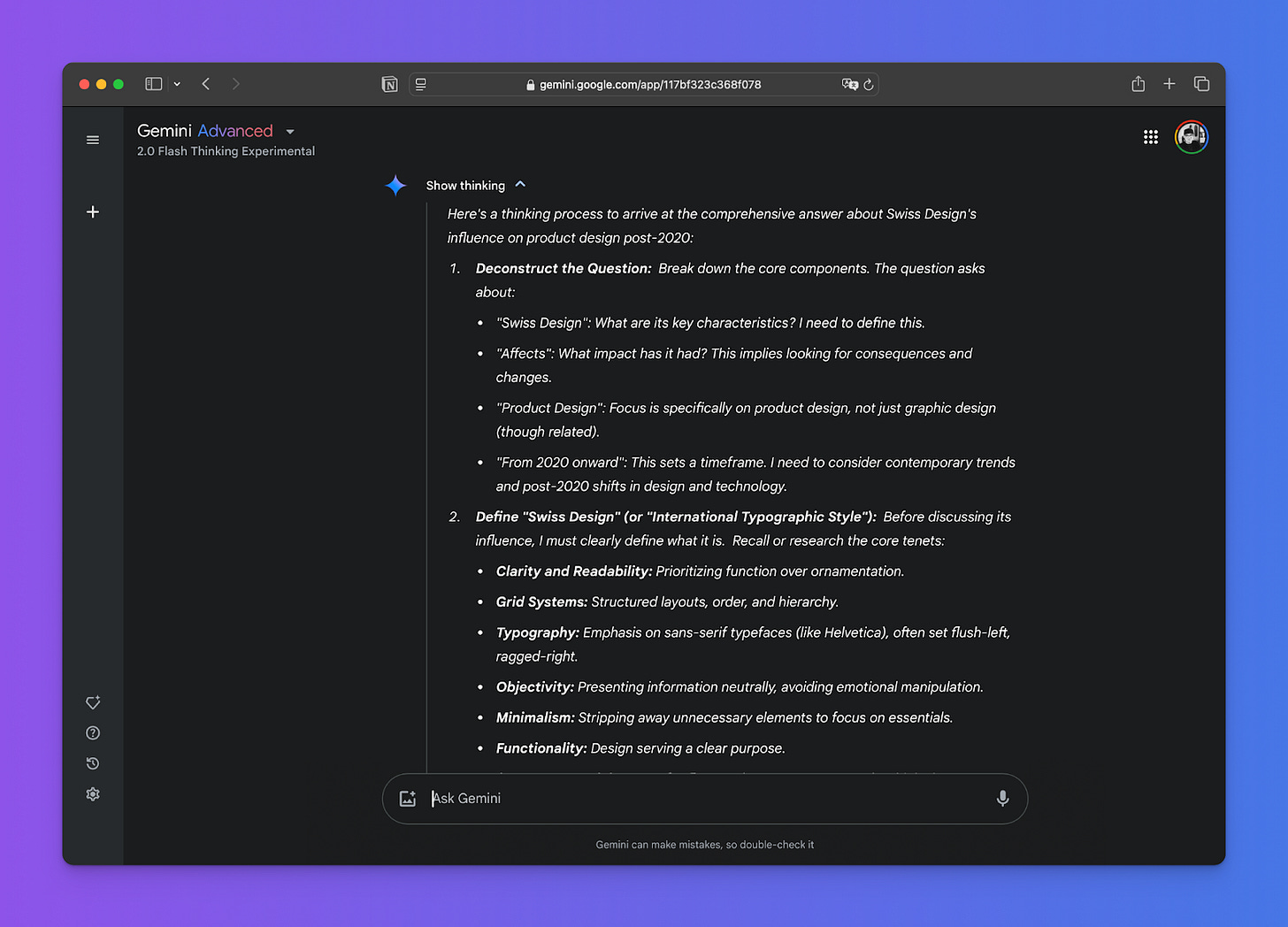

Chatbots: Products like ChatGPT and Gemini tap into users' mental model of human conversation. Users expect chatbots to understand natural language, respond appropriately to questions and requests, and maintain a coherent dialogue. A well-designed chatbot doesn't require users to learn a special command language; they can simply type or speak as they would to another person. For example, instead of typing "/get_weather_city=London," a user can simply ask, "What's the weather in London?" Because the chatbot works the way users already expect a conversation to work, the question itself is enough for it to infer the intent and provide the answer.

Image Generation Tools: Midjourney, DALL-E, Imagen, and others rely on users' mental models of visual concepts and how they relate to language. People know what a "red apple" looks like, and they expect the AI to translate that textual description into a corresponding image. The tool builds on this shared understanding to interpret the prompt and generate a relevant visual. More advanced prompts might combine several concepts ("a red apple on a wooden table in a sunny room"). The AI needs to capture the relationships between these concepts, mirroring the user's mental model of how these elements would fit together in a real-world scene.

Music Generators: These tools often tap into users' mental models of musical structure, genre conventions, and emotional expression. A person might have a mental model of what a "jazz blues" piece sounds like, including its characteristic chord progressions, rhythms, and instruments. The AI can then generate music that conforms to those expectations. Similarly, a person might describe the desired mood of a piece ("something upbeat and energetic"), and the AI can draw on the musical elements associated with that mood to create a fitting composition.

In each of these examples, the success of the generative AI product hinges on its ability to leverage and align with the user's existing mental models. By doing so, the AI becomes a more intuitive and helpful collaborator, rather than a confusing or unpredictable tool.

As generative AI continues to evolve, its success will depend heavily on how well we integrate it with our existing mental models. By understanding this and designing AI experiences that align with users' understanding, we can make the technology useful in real-world scenarios. This isn't just a UX concern; it's a fundamental principle for creating AI products that are intuitive, trustworthy, and ultimately beneficial to humanity. Prioritizing users' mental models is not just good design; it's essential for creating a future where humans and AI collaborate seamlessly and effectively.